Building an LLM application:

- Jun 4, 2025

A couple of weeks ago, I attended a mini AI conference and heard an insightful talk from a senior LLMOps manager. It gave a more comprehensive picture of the LLM application lifecycle and helped me significantly in reflecting on our own practice. I wanted to share it here for anyone interested.

1. Define the Objective: Clearly articulate the problem your application aims to solve and the scope of the MVP / POC.

2. Data Ingestion & Preparation:

- Structured Data: Store in Unity Catalog or SQL Server.

- Unstructured Data: Store in ADLS.

- Data Versioning: Utilize Git for code and data.

- Data Cleaning & Transformation: Prepare data for analysis.

- Data Indexing & Storage: Azure AI Search for efficient search; ChromaDB for embedding and vector storage.

- RAG Data Versioning: Employ MLflow and Promptflow.
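To make the indexing step above more concrete, here is a minimal sketch of what a vector store does, written in plain Python. It is not the ChromaDB or Azure AI Search API. The `embed` function is a toy bag-of-words stand-in for a real embedding model, and `TinyVectorIndex` and the sample documents are hypothetical names and data used only for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorIndex:
    """Hypothetical in-memory index: stores vectors, returns the closest documents."""

    def __init__(self):
        self._docs = {}  # doc_id -> (text, vector)

    def add(self, doc_id: str, text: str) -> None:
        self._docs[doc_id] = (text, embed(text))

    def query(self, question: str, k: int = 2) -> list:
        q = embed(question)
        scored = [(cosine(q, vec), doc_id) for doc_id, (_, vec) in self._docs.items()]
        scored.sort(reverse=True)
        # Drop documents with no overlap at all.
        return [doc_id for score, doc_id in scored[:k] if score > 0]


# Placeholder documents, invented for this example.
index = TinyVectorIndex()
index.add("refund", "refund policy for returned items")
index.add("shipping", "shipping times and delivery costs")
index.add("warranty", "warranty coverage for damaged items")

top = index.query("what is the refund policy", k=1)
```

The retrieved document IDs are what a RAG application would then pass, together with the question, into the prompt.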

3. LLM Model Selection:

- Model Architecture & Data: Consider factors like model size, training data, and fine-tuning approach.

- Experimentation: Test different models and configurations to optimize cost, performance, and latency.
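One simple way to read the experimentation step is: run each candidate on the same test set, record quality, latency, and cost, then pick the cheapest one that clears your bars. The sketch below assumes exactly that rule. The model names and all numbers are made-up placeholders, not measurements, and `RunResult` and `pick_model` are hypothetical helpers.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    name: str
    quality: float     # 0..1, e.g. share of test cases judged correct
    latency_s: float   # average seconds per request
    cost_usd: float    # average cost per 1,000 requests


def pick_model(results, min_quality, max_latency_s):
    """Return the cheapest candidate that meets the quality and latency bars, or None."""
    eligible = [
        r for r in results
        if r.quality >= min_quality and r.latency_s <= max_latency_s
    ]
    if not eligible:
        return None
    return min(eligible, key=lambda r: r.cost_usd)


# Placeholder numbers for illustration only.
results = [
    RunResult("large-model", quality=0.92, latency_s=2.4, cost_usd=30.0),
    RunResult("medium-model", quality=0.88, latency_s=1.1, cost_usd=6.0),
    RunResult("small-model", quality=0.74, latency_s=0.4, cost_usd=1.0),
]

best = pick_model(results, min_quality=0.85, max_latency_s=2.0)
```

Other selection rules (for example a weighted score) are equally reasonable; the point is to make the trade-off explicit and repeatable.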

4. Prompt Engineering:

- Crafting Effective Prompts: Write diverse, relevant, structured, natural-language prompts.

- Leveraging Frameworks: Utilize OpenAI, Langchain, or LlamaIndex for prompt templates.

- Prompt Versioning: Employ Promptflow and MLflow.
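As a rough illustration of prompt templates and versioning, the sketch below uses only the Python standard library rather than Promptflow, MLflow, Langchain, or LlamaIndex. It assumes a version can be derived from a hash of the template text, so any edit to the prompt produces a new ID. The template wording, `prompt_version`, and `render` are hypothetical.

```python
import hashlib
from string import Template

# Hypothetical prompt template; $context and $question are filled in per request.
PROMPT = Template(
    "You are a support assistant.\n"
    "Answer using only the context below.\n\n"
    "Context:\n$context\n\n"
    "Question: $question\n"
)


def prompt_version(template: Template) -> str:
    """Short content hash of the template text, usable as a version ID."""
    return hashlib.sha256(template.template.encode("utf-8")).hexdigest()[:12]


def render(template: Template, **fields) -> str:
    """Fill the template; raises KeyError if a placeholder is missing."""
    return template.substitute(**fields)


version = prompt_version(PROMPT)
prompt = render(
    PROMPT,
    context="Refunds are accepted within 30 days.",  # placeholder context
    question="Can I return an item?",
)
```

Logging `version` next to each model response makes it possible to tell later which prompt wording produced which output.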

5. LLM Evaluation:

- Robust Evaluation: Establish strong evaluation metrics and a comprehensive test dataset.

- Human-in-the-Loop: Involve human evaluation for ground truth.

- Ethical Considerations: Prioritize ethical implications in evaluation.

- RAG Application Evaluation: Utilize LlamaIndex evaluations and MLflow.
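A minimal sketch of the evaluation idea above: a small test set with human-written reference answers, scored with two common text metrics (exact match and token-level F1). This does not use LlamaIndex or MLflow. The test questions, `stub_app`, and the `evaluate` helper are hypothetical stand-ins for your own application and dataset.

```python
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return " ".join(text.lower().split())


def exact_match(prediction: str, reference: str) -> bool:
    return normalize(prediction) == normalize(reference)


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall against the reference."""
    pred = normalize(prediction).split()
    ref = normalize(reference).split()
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def evaluate(app, test_set):
    """Run `app` on every (question, reference) pair and average the metrics."""
    em_scores, f1_scores = [], []
    for question, reference in test_set:
        answer = app(question)
        em_scores.append(1.0 if exact_match(answer, reference) else 0.0)
        f1_scores.append(token_f1(answer, reference))
    n = len(test_set)
    return {"exact_match": sum(em_scores) / n, "mean_f1": sum(f1_scores) / n}


# Placeholder test set with human-provided ground truth.
test_set = [("capital of France?", "Paris"), ("2 + 2?", "4")]


def stub_app(question: str) -> str:
    """Stand-in for the real LLM application."""
    return {"capital of France?": "paris", "2 + 2?": "5"}[question]


report = evaluate(stub_app, test_set)
```

String-overlap metrics are only a starting point; relevance, toxicity, and ethical checks typically need human review or a separate judge.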

6. Fine-Tuning (Optional): Applicable when the LLM's results are not good enough.

- Model Selection & Data Preparation: Choose the right pre-trained model and data.

- Transfer Learning & Regularization: Apply techniques to enhance model performance.

- Iterative Fine-Tuning: Refine the model through multiple iterations.
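The talk did not go into how to decide when to stop iterating. One common approach, shown here as an assumption rather than the speaker's method, is early stopping: keep running fine-tuning rounds while a validation score improves, and stop after a few rounds without improvement. `train_round` and `evaluate` are hypothetical callables you would supply, and the scores are scripted placeholders.

```python
def run_rounds(train_round, evaluate, max_rounds=10, patience=2):
    """Run fine-tuning rounds until the validation score stops improving.

    Returns (best_round, best_score). Stops after `patience` consecutive
    rounds that fail to beat the best score seen so far.
    """
    best_score, best_round, stale = float("-inf"), None, 0
    for round_no in range(1, max_rounds + 1):
        train_round(round_no)          # e.g. one more pass of fine-tuning
        score = evaluate(round_no)     # validation score after this round
        if score > best_score:
            best_score, best_round, stale = score, round_no, 0
        else:
            stale += 1
            if stale >= patience:
                break
    return best_round, best_score


# Scripted placeholder scores standing in for real validation results.
scripted_scores = [0.70, 0.78, 0.81, 0.80, 0.79, 0.85]
rounds_run = []

best_round, best_score = run_rounds(
    train_round=lambda n: rounds_run.append(n),
    evaluate=lambda n: scripted_scores[n - 1],
    max_rounds=len(scripted_scores),
    patience=2,
)
```

With these scripted scores the loop stops after round 5 and keeps the checkpoint from round 3, which also illustrates the cost of a small `patience`: the later improvement in round 6 is never seen.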

7. LLM Implementation & Deployment:

- Microservices Architecture: Design REST API-based microservices.

- Deployment Platforms: Utilize Azure OpenAI and Databricks.

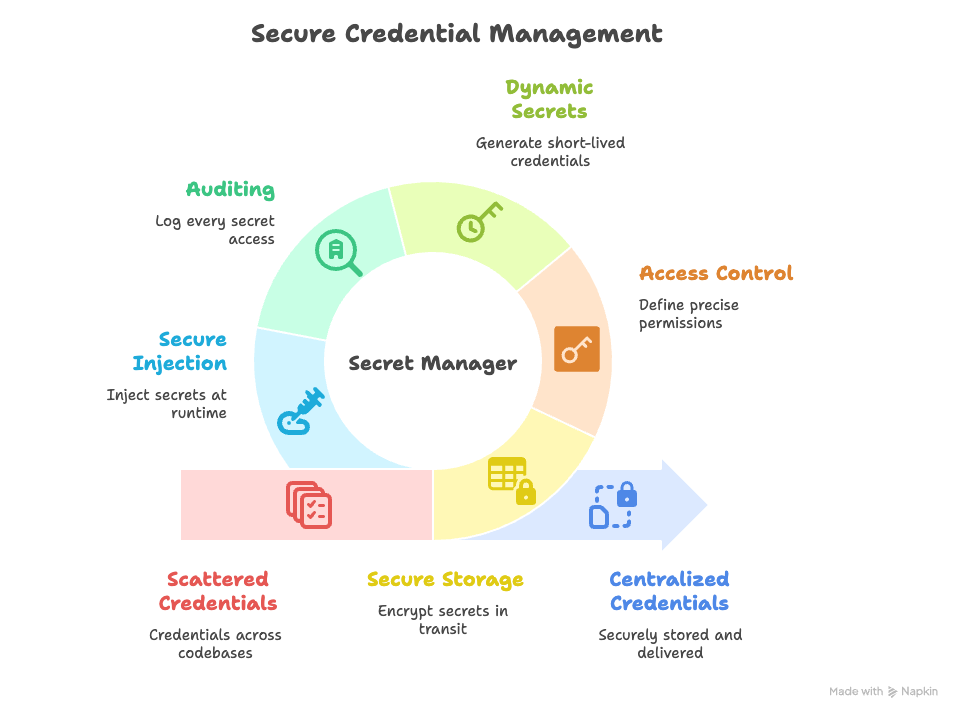

- Security & Governance: Implement robust security measures.
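For the REST-based microservice idea above, here is a small sketch using only Python's standard `http.server`. It is not Azure OpenAI or Databricks code. The `/generate` path, the JSON field names, and the `generate` stub (which just echoes the prompt) are assumptions for illustration, and a production service would add authentication, rate limiting, and logging.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate(prompt: str) -> str:
    """Placeholder for the real model call (hypothetical)."""
    return f"echo: {prompt}"


def handle_generate(body: bytes):
    """Validate the request body and return (status_code, response_dict)."""
    try:
        payload = json.loads(body or b"{}")
    except ValueError:  # covers invalid JSON and undecodable bytes
        return 400, {"error": "body must be valid JSON"}
    prompt = payload.get("prompt") if isinstance(payload, dict) else None
    if not isinstance(prompt, str) or not prompt.strip():
        return 400, {"error": "'prompt' must be a non-empty string"}
    return 200, {"completion": generate(prompt)}


class Handler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/generate":
            self._send(404, {"error": "not found"})
            return
        length = int(self.headers.get("Content-Length", 0))
        status, data = handle_generate(self.rfile.read(length))
        self._send(status, data)

    def _send(self, status: int, data: dict) -> None:
        raw = json.dumps(data).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(raw)))
        self.end_headers()
        self.wfile.write(raw)


def serve(host: str = "127.0.0.1", port: int = 8000) -> None:
    """Start the service; blocks until interrupted."""
    HTTPServer((host, port), Handler).serve_forever()
```

Calling `serve()` starts the service locally. Keeping the validation in `handle_generate` separate from the HTTP handler makes the request logic testable without opening a socket.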

8. LLM Monitoring:

- Performance Tracking: Monitor key metrics like correctness, relevance, toxicity, latency, etc.

- Tooling: Employ MLflow or Phoenix for monitoring.

- Prompt Response Debugging: Analyze prompt responses and performance against baselines.
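To illustrate tracking metrics against a baseline, the sketch below summarizes a batch of logged requests and flags metrics that regress beyond a tolerance. It does not use MLflow or Phoenix. The record fields, the 5% tolerance, and all numbers are assumptions chosen for the example.

```python
import math


def p95(values):
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def summarize(records):
    """Aggregate per-request logs into the metrics we want to watch."""
    return {
        "p95_latency_s": p95([r["latency_s"] for r in records]),
        "correct_rate": sum(1 for r in records if r["correct"]) / len(records),
    }


def regressions(current, baseline, tolerance=0.05):
    """Names of metrics that are worse than the baseline by more than `tolerance`."""
    alerts = []
    if current["p95_latency_s"] > baseline["p95_latency_s"] * (1 + tolerance):
        alerts.append("p95_latency_s")
    if current["correct_rate"] < baseline["correct_rate"] * (1 - tolerance):
        alerts.append("correct_rate")
    return alerts


# Placeholder logs; in practice these come from request tracing.
records = [
    {"latency_s": 0.8, "correct": True},
    {"latency_s": 0.9, "correct": True},
    {"latency_s": 1.0, "correct": False},
    {"latency_s": 3.0, "correct": True},
]
baseline = {"p95_latency_s": 1.5, "correct_rate": 0.9}

summary = summarize(records)
alerts = regressions(summary, baseline)
```

The same pattern extends to relevance or toxicity scores: log a value per response, aggregate per time window, and compare with the baseline from the last accepted release.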